Academic integrity in the age of AI writing

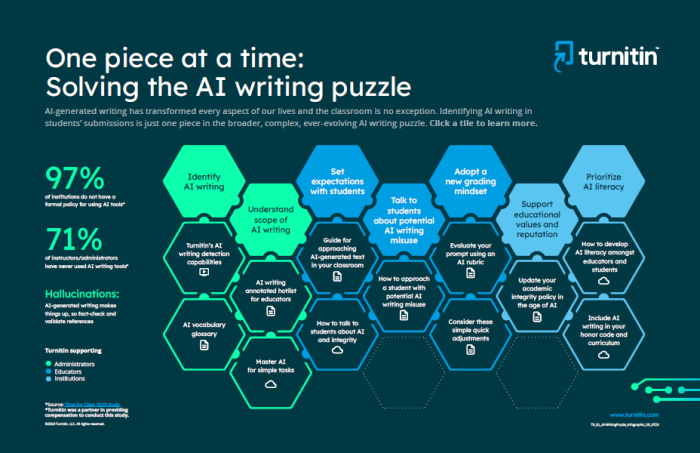

Over the years, academic integrity has been both supported and tested by technology. Today, educators are facing a new frontier with AI writing and ChatGPT.

Here at Turnitin, we believe that AI can be a positive force that, when used responsibly, has the potential to support and enhance the learning process. We also believe that equitable access to AI tools is vital, which is why we’re working with students and educators to develop technology that serves that goal. However, it is important to acknowledge new challenges alongside the opportunities.

We recognise that for educators, there is a pressing and immediate need to know when and where AI and AI writing tools have been used by students. This is why we are now offering AI detection capabilities for educators in our products.

AI writing indicator keeps educators informed

Gain insight into how much of a student’s submission is authentic human writing and how much is likely generated by ChatGPT or other AI tools.

AI writing reporting with powerful results

Reporting identifies likely AI-written text and provides information educators need to determine their next course of action. We’ve designed our solution with educators, for educators.

Check student essays as you do today

AI writing detection complements Turnitin’s similarity checking workflow and is integrated with your LMS, providing a seamless, familiar experience.

Research corner

We regularly undertake internal research to ensure our AI writing detector stays accurate and up-to-date. If you are interested in what external testing has revealed about Turnitin's AI-writing detection capabilities, check out the links below. Notably, these studies position Turnitin among the foremost solutions in identifying AI-generated content within academia.

Research finds no statistically significant bias against English Language Learners in Turnitin's AI detector

- In response to customer feedback and to papers claiming that AI writing detection tools are biased against writers whose first language is not English, Turnitin expanded its false positive evaluation to include nearly 2,000 additional writing samples from English Language Learners (ELL).

- Turnitin found that, in documents meeting the 300-word minimum, the false positive rate was 0.014 for ELL writers and 0.013 for native English writers.

- This means that there is no statistically significant bias against non-native English speakers.
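
For readers who want to see how a comparison like this can be checked, here is a minimal sketch of a two-proportion z-test in Python. It is not Turnitin's method, which is not described here. The sample counts are hypothetical: 2,000 documents per group is an assumption made for round numbers (the size of the native English sample is not stated above), and the false positive counts are chosen only to reproduce the reported rates of 0.014 and 0.013.

```python
import math


def two_proportion_z_test(count_a, n_a, count_b, n_b):
    """Return (z, two-sided p-value) for the difference between two proportions."""
    p_a = count_a / n_a
    p_b = count_b / n_b
    # Pooled proportion under the null hypothesis that both rates are equal
    pooled = (count_a + count_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / std_err
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# HYPOTHETICAL counts, chosen only to match the reported rates
ell_n, ell_false_pos = 2000, 28        # 28 / 2000 = 0.014
native_n, native_false_pos = 2000, 26  # 26 / 2000 = 0.013

z, p_value = two_proportion_z_test(ell_false_pos, ell_n, native_false_pos, native_n)
print(f"z = {z:.3f}, p = {p_value:.3f}")
```

Under these assumed sample sizes the p-value comes out well above the conventional 0.05 threshold, which is what "no statistically significant difference" means in practice. With different sample sizes the exact figures would change.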

Turnitin’s AI writing detector identified as the most accurate out of 16 detectors tested

- Two of the 16 detectors, Turnitin and Copyleaks, correctly identified the AI- or human-generated status of all 126 documents, with no incorrect or uncertain responses.

- Three AI text detectors (Turnitin, Originality, and Copyleaks) had very high accuracy on all three sets of documents examined for this study: GPT-3.5 papers, GPT-4 papers, and human-generated papers.

- Of the top three detectors identified in this investigation, Turnitin achieved very high accuracy in all five previous evaluations. Copyleaks, included in four earlier analyses, performed well in three of them.